The transition from simple chat interfaces to autonomous agents in the software development lifecycle marks a major shift in how modern companies build products. While these agents promise to handle the heavy lifting of coding, testing, and debugging, they also introduce an unpredictable new variable into the enterprise ecosystem. Without proper guardrails, an agent tasked with optimizing a database might inadvertently expose sensitive credentials or introduce a logic flaw that compromises the entire system. This is why AI code security has moved from a niche concern to a primary requirement for any organization looking to integrate generative AI into its production pipelines.

The Growing Tension Between Autonomy and Oversight

In the early days of the generative AI boom, the focus was almost entirely on model intelligence. Developers wanted to know which LLM could write the most elegant Python script or the most efficient SQL query. However, as we move into the era of agentic workflows, where AI doesn’t just suggest code but actually executes multi-step tasks, the conversation has fundamentally changed. It is no longer just about how smart the model is, but about how much control we have over its actions.

When an AI agent operates within a sandbox, it is relatively safe. It can experiment, fail, and crash without impacting the real world. The danger arises when these agents are granted access to real-time data, internal repositories, and deployment pipelines. A pilot program might show incredible results in a controlled environment, but once that same agent is connected to a live enterprise environment, the stakes skyrocket. This is where the concept of orchestration failure becomes critical. An orchestration failure occurs when the sequence of tasks assigned to an AI goes off the rails, leading to unintended consequences that no single model could have predicted.

The industry is currently witnessing a split in philosophy. On one side, there is a push for maximum autonomy, where agents are given broad permissions to solve complex problems with minimal human interference. On the other side, there is a growing demand for governance, where every action taken by an AI is logged, validated, and, most importantly, approved by a human. This divide is shaping the next generation of developer tools, as companies realize that “oops” is not an acceptable response when a security breach occurs.

How IBM Bob Addresses AI Code Security Through Multi-Model Routing

IBM recently entered this fray with the global launch of Bob, an AI-powered software development platform. Unlike tools that simply provide a smarter autocomplete, Bob is designed to manage the entire lifecycle of code creation and testing. What makes Bob particularly interesting from a security and reliability perspective is its reliance on multi-model routing and structured checkpoints.

Instead of tethering an entire enterprise to a single LLM, Bob allows developers to leverage different models for different tasks. It supports a curated selection of high-performing models, including IBM’s own Granite series, Anthropic’s Claude, and Mistral. By routing specific tasks to the most appropriate model, the system can optimize for both performance and safety. For instance, a highly sensitive task involving architectural logic might be routed to a more robust, heavily guarded model, while a routine documentation task might use a faster, more distilled model.

This multi-model approach is a cornerstone of modern AI code security because it avoids dependence on a single model. If one model exhibits unexpected behavior, or a weakness is traced to a particular model’s training data, the routing layer can act as a filter, ensuring that critical code paths are handled by the most trusted and audited engines available. This creates a layered defense that is harder to penetrate than a single-model system.
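The routing idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the model names, the `Task` type, and the `route` function are hypothetical and do not reflect Bob's actual configuration or API.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical model identifiers, chosen for illustration only.
ROUTES = {
    "architecture": "audited-reasoning-model",
    "documentation": "fast-distilled-model",
}
TRUSTED_MODEL = "audited-reasoning-model"


@dataclass(frozen=True)
class Task:
    kind: str
    sensitive: bool = False


def route(task: Task, quarantined: frozenset[str] = frozenset()) -> str:
    """Pick a model for a task, avoiding any model that has been quarantined."""
    # Sensitive tasks always go to the most trusted model.
    preferred = TRUSTED_MODEL if task.sensitive else ROUTES.get(task.kind, TRUSTED_MODEL)
    if preferred not in quarantined:
        return preferred
    # The preferred model is quarantined; fall back to the trusted one if possible.
    if TRUSTED_MODEL in quarantined:
        raise RuntimeError("no trusted model available")
    return TRUSTED_MODEL
```

For example, `route(Task("documentation"))` selects the fast model, while passing `frozenset({"fast-distilled-model"})` as `quarantined` sends the same task to the trusted model instead.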

1. Implementing Human-in-the-Loop Checkpoints

One of the most significant risks with autonomous agents is the “black box” problem, where a developer has no idea how an agent arrived at a specific piece of code. Bob mitigates this by introducing a structured layer of human-led checkpoints. Instead of allowing an agent to run from start to finish in total isolation, the platform forces the agent to pause at critical junctures.

To implement this effectively, organizations should define specific “gates” within their development workflow. For example, an agent might be allowed to write a function autonomously, but it must stop and request human approval before that function is integrated into the main branch or before it is allowed to run a test suite against production-like data. This ensures that a human eye is always present to verify the logic and security of the code, filling the audit gaps that often plague fully autonomous systems.
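A gate of this kind might look like the following minimal sketch. The action names, `GATED_ACTIONS`, and the `approve` callback are assumptions made for illustration; a real platform would wire the approval step into its own review interface.

```python
from typing import Callable, Optional

# Hypothetical actions that must never run without human sign-off.
GATED_ACTIONS = {"merge_to_main", "run_tests_on_prodlike_data"}


class ApprovalRequired(Exception):
    """Raised when a gated action is attempted without approval."""


def perform(action: str, approve: Optional[Callable[[str], bool]] = None) -> str:
    """Run an agent action, pausing at gates until a human approves."""
    if action in GATED_ACTIONS:
        # No approver supplied, or the approver said no: stop here.
        if approve is None or not approve(action):
            raise ApprovalRequired(action)
    return f"executed:{action}"
```

Ungated work such as `perform("write_function")` proceeds on its own, while `perform("merge_to_main")` raises `ApprovalRequired` unless a reviewer callback returns `True`.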

2. Utilizing Multi-Model Redundancy for Validation

A powerful way to enhance security is to use one model to write code and a completely different model to audit it. This is a concept known as cross-model verification. Because different LLMs are trained on different datasets and have different architectural biases, they are less likely to make the exact same logical error.

In a sophisticated workflow, you might use a highly creative model to generate a complex algorithm, but then route that output to a more “conservative” or “reasoning-heavy” model specifically tasked with looking for vulnerabilities. This second model acts as a digital peer reviewer. If the two models disagree on the validity or safety of the code, the system can automatically flag the discrepancy for a human developer to investigate. This significantly reduces the likelihood of a subtle security flaw slipping through the cracks.
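The disagreement rule is simple enough to sketch directly. In this hypothetical example the two "models" are stand-in functions that return `True` when they consider the code safe; in practice each would be a call to a different LLM.

```python
from typing import Callable

Check = Callable[[str], bool]  # returns True when the code looks safe


def cross_verify(code: str, author_check: Check, reviewer_check: Check) -> str:
    """Combine two independent verdicts; any disagreement goes to a human."""
    author_ok = author_check(code)
    reviewer_ok = reviewer_check(code)
    if author_ok and reviewer_ok:
        return "accept"
    if not author_ok and not reviewer_ok:
        return "reject"
    return "escalate_to_human"


# Stand-in reviewers (assumptions): one lenient, one that flags eval().
def lenient(code: str) -> bool:
    return True


def strict(code: str) -> bool:
    return "eval(" not in code
```

With these stand-ins, `cross_verify("eval(user_input)", lenient, strict)` returns `"escalate_to_human"`, because the two reviewers disagree.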

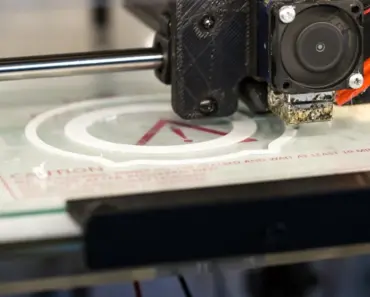

3. Sandboxing and Environment Isolation

Security in AI development is not just about the code itself, but about where that code is allowed to run. This is why technologies like Nvidia’s NemoClaw and Kilo’s Kilo Claw have become so relevant. These tools essentially build a “fence” around the sandbox environment where autonomous agents operate.

For any enterprise implementing AI agents, sandboxing is non-negotiable. A sandbox is an isolated computing environment that mimics the real production environment but has no actual access to sensitive data or external networks unless explicitly permitted. By forcing agents to work within these highly controlled containers, you ensure that even if an agent generates malicious or broken code, the impact is contained. The code can crash the sandbox, but it cannot crash your company.
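As a rough illustration of containment, the sketch below runs untrusted code in a separate Python process with a stripped-down environment, a throwaway working directory, and a timeout. It is deliberately simplistic and is not a real security boundary: it does not block network or filesystem access, which production sandboxes enforce with containers or virtual machines. The function name and the environment allowlist are assumptions.

```python
import os
import subprocess
import sys
import tempfile

# Only these variables are passed through; secrets in the parent stay behind.
ALLOWED_ENV = ("PATH", "SYSTEMROOT", "LD_LIBRARY_PATH")


def run_in_sandbox(code: str, timeout_s: float = 5.0) -> dict:
    """Run code in a child process with a minimal environment and a time limit."""
    env = {k: os.environ[k] for k in ALLOWED_ENV if k in os.environ}
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                [sys.executable, "-I", "-c", code],  # -I: isolated mode
                cwd=workdir,
                env=env,
                capture_output=True,
                text=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            # Runaway code is killed instead of hanging the caller.
            return {"status": "timeout", "stdout": ""}
    status = "ok" if proc.returncode == 0 else "crashed"
    return {"status": status, "stdout": proc.stdout.strip()}
```

A crash or infinite loop inside the child is reported as a status value to the caller, which mirrors the point above: the generated code can fail without taking the host process down with it.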

4. Role-Based Access Control for Agentic Tasks

Not all AI agents should have the same level of authority. Just as a human junior developer has different permissions than a senior DevOps engineer, AI agents should operate under a strict principle of least privilege. This is where role-based stages become vital.

When configuring your AI orchestration layer, you should assign specific “roles” to your agents. An “Explorer Agent” might have permission to read documentation and suggest code snippets but zero permission to execute any commands. A “Tester Agent” might be allowed to run code within a sandbox but not allowed to access the internet. By segmenting permissions, you limit the blast radius of any single agent that might behave unexpectedly. This structured approach allows for predictable execution and makes it much easier to track which agent performed which action.
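One way to express these roles is with permission flags. The sketch below is hypothetical: the permission names and the `ROLES` table are illustrative, with the "explorer" and "tester" entries modeled on the two agents described above.

```python
from enum import Flag, auto


class Permission(Flag):
    NONE = 0
    READ_DOCS = auto()
    SUGGEST_CODE = auto()
    EXECUTE_IN_SANDBOX = auto()
    NETWORK = auto()


# Each role gets only what it needs (least privilege).
ROLES = {
    "explorer": Permission.READ_DOCS | Permission.SUGGEST_CODE,
    "tester": Permission.EXECUTE_IN_SANDBOX,
}


def is_allowed(role: str, needed: Permission) -> bool:
    """Return True only if the role holds every requested permission."""
    granted = ROLES.get(role, Permission.NONE)  # unknown roles get nothing
    return (granted & needed) == needed
```

Here `is_allowed("explorer", Permission.EXECUTE_IN_SANDBOX)` and `is_allowed("tester", Permission.NETWORK)` both return `False`, so a misbehaving agent cannot step outside its assigned role.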

5. Continuous Audit Logging and Traceability

In a traditional development environment, we have Git logs to show who changed what and when. In an AI-driven environment, we need something much more granular. We need to know not just what code was changed, but what prompt was used, which model generated the response, and what the intermediate reasoning steps were.

To maintain high standards of AI code security, every interaction between a human and an agent, and every interaction between an agent and the codebase, must be logged in an immutable audit trail. This traceability is essential for debugging complex agentic failures and for compliance with industry regulations. If a security incident occurs, a detailed log allows investigators to reconstruct the exact sequence of events, identifying whether the error was a result of a bad prompt, a model hallucination, or an orchestration failure.
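A common way to approximate an immutable trail is a hash chain, where each entry includes the hash of the one before it. The sketch below is an assumption about how such a log could be built, not a description of any specific product; it makes tampering detectable rather than impossible, and the field names are illustrative.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.entries = []

    def append(self, actor: str, model: str, prompt: str, action: str) -> dict:
        """Record one interaction, chained to the previous entry's hash."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {"actor": actor, "model": model, "prompt": prompt,
                  "action": action, "prev": prev}
        record["hash"] = _digest(record)  # hash covers every field above
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True
```

If someone later rewrites a stored prompt, `verify()` returns `False`, which gives investigators a signal that the record can no longer be trusted.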

6. Managing Resource Consumption via Credit Systems

While it might seem like a purely financial concern, how an organization manages its AI usage can have direct security implications. Uncontrolled AI usage can lead to “denial of service” scenarios where runaway agentic loops consume all available computational resources or exhaust API quotas, effectively shutting down the development pipeline.

IBM’s approach with its “Bobcoins” system suggests a way to manage this through a structured credit system. By assigning credits to different users or teams, organizations can prevent any single project from monopolizing the AI infrastructure. More importantly, this allows for better monitoring of usage patterns. A sudden, massive spike in credit consumption by a specific agent can serve as an early warning sign that an agent has entered an infinite loop or is being used in an unauthorized manner, allowing security teams to intervene before the impact becomes critical.
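A credit ledger with a basic spike check could be sketched as follows. Nothing here reflects how Bobcoins actually work; the class, the per-team budgets, and the spike threshold are hypothetical choices made to illustrate the monitoring idea.

```python
from collections import defaultdict


class BudgetExceeded(Exception):
    """Raised when a charge would overdraw a team's credits."""


class CreditLedger:
    def __init__(self, budgets: dict, spike_factor: float = 5.0) -> None:
        self.remaining = dict(budgets)
        self.history = defaultdict(list)
        self.spike_factor = spike_factor

    def charge(self, team: str, credits: int) -> bool:
        """Deduct credits; return True if this charge looks like a spike."""
        if credits > self.remaining.get(team, 0):
            raise BudgetExceeded(team)
        past = self.history[team]
        # Baseline is the team's average charge so far (None on first use).
        baseline = sum(past) / len(past) if past else None
        self.remaining[team] -= credits
        past.append(credits)
        return baseline is not None and credits > self.spike_factor * baseline
```

A team that normally spends 2 credits per task and suddenly spends 50 would get `True` back from `charge`, which a monitoring system could turn into an alert about a possible runaway loop.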

7. Standardizing Governance Over Flexibility

The final piece of the puzzle is the choice between a “free-for-all” experimentation model and a standardized governance model. Tools like Cursor or Claude Code are fantastic for individual productivity, placing the user at the center of every prompt and step. However, for an enterprise with thousands of developers, this level of fragmentation can become a security nightmare.

The solution is to provide a platform that offers the flexibility of these advanced tools but wraps them in a layer of corporate governance. This means providing a centralized platform where the models, the sandboxes, the security policies, and the human-in-the-loop protocols are all standardized. When every developer uses a governed platform, the security team can implement global guardrails that apply to everyone, rather than trying to chase down individual developers using unmanaged, shadow AI tools.
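Centralized guardrails can be as simple as one organization-wide policy that every tool configuration is checked against. The policy keys and model labels below are hypothetical and serve only to show the shape of such a check.

```python
from typing import Dict, List

# A single organization-wide policy (illustrative values).
ORG_POLICY = {
    "approved_models": {"granite", "claude", "mistral"},
    "sandbox_required": True,
    "human_approval_required": True,
}


def policy_violations(tool_config: Dict) -> List[str]:
    """List every way a developer's tool configuration breaks org policy."""
    problems = []
    if tool_config.get("model") not in ORG_POLICY["approved_models"]:
        problems.append("unapproved model")
    if ORG_POLICY["sandbox_required"] and not tool_config.get("sandbox", False):
        problems.append("sandbox disabled")
    if ORG_POLICY["human_approval_required"] and not tool_config.get("human_approval", False):
        problems.append("human approval disabled")
    return problems
```

A configuration that uses an unmanaged model with the sandbox switched off would return `["unapproved model", "sandbox disabled"]`, giving the security team one place to detect shadow AI setups.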

The Economic and Operational Impact of Guarded AI

The move toward more structured, guarded AI is not just a defensive maneuver; it is an efficiency play. IBM’s internal data shows that after moving from a small pilot to a large-scale deployment across 80,000 employees, teams were saving an average of 10 hours per week. That is a staggering amount of reclaimed time that can be redirected toward high-level architecture, complex problem solving, and innovation.

The reason these savings are possible without sacrificing security is the predictability that structure provides. When developers know that the AI is operating within a set of defined boundaries, they can trust its output more readily. They spend less time second-guessing every line of code and more time acting as the “pilot” of a highly capable automated system. The goal is to move from a state of “AI-assisted coding” to “AI-orchestrated development,” where the human’s role shifts from writing syntax to managing intent and verifying outcomes.

As we look toward the future, the divide between “ease of use” and “enterprise reliability” will continue to define the market. Companies that prioritize only speed will likely face significant setbacks when their autonomous agents inevitably encounter the complexities of real-world data and security requirements. Conversely, companies that embrace a structured, multi-layered approach to AI code security will be the ones to truly harness the transformative power of agentic AI.

The era of the “wild west” in AI coding is coming to a close. The next phase of enterprise technology will be defined by how effectively we can build the gates, the fences, and the checkpoints that allow us to run fast without losing control.