AI agents are moving into production across industries faster than the controls meant to secure them. The RSAC 2026 keynotes made the point from several directions. Microsoft’s Vasu Jakkal emphasized the importance of extending zero-trust principles to AI, while Cisco’s Jeetu Patel advocated for a shift from access control to action control. Similarly, CrowdStrike’s George Kurtz pinpointed AI governance as the biggest gap in enterprise technology, and Splunk’s John Morgan called for an agentic trust and governance model. Taken together, these positions point to the same conclusion: securing AI agents requires immediate attention.

The Monolithic Agent Problem

The default enterprise agent pattern is a monolithic container that bundles multiple functions: reasoning, calling tools, executing generated code, and storing credentials. This design is risky because every component inside the container trusts every other component. OAuth tokens, API keys, and Git credentials are often stored alongside the agent’s code, so a successful prompt injection can put them within an attacker’s reach. An attacker who gains access to the container can exfiltrate tokens, spawn sessions, and compromise the entire system. The blast radius of a compromised agent therefore extends far beyond the agent itself, to every connected service.

The Prevalence of Shared Service Accounts and Workload Identities

A recent survey conducted by the Cloud Security Alliance (CSA) and Aembit found that 43% of organizations use shared service accounts for agents, while 52% rely on workload identities rather than agent-specific credentials. Without that segregation, a credential compromised through one agent exposes everything the credential can reach, and the resulting activity cannot be traced to a single agent. Moreover, 68% of organizations struggle to distinguish agent activity from human activity in their logs, which slows detection and response. The problem is compounded by ownership: no single function, whether security or development, claims responsibility for AI agent access.
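One way to make agent activity distinguishable from human activity is to record the actor type explicitly in every audit event. The sketch below is a minimal illustration, not a reference to any particular logging product: the `Actor` fields, the `audit_record` helper, and the identifiers in the usage note are all hypothetical names chosen for this example.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class Actor:
    """Who performed an action (hypothetical schema for illustration)."""
    actor_type: str                      # "human" or "agent"
    actor_id: str                        # unique per person or per agent
    on_behalf_of: Optional[str] = None   # human who delegated to the agent


def audit_record(actor: Actor, action: str, resource: str) -> str:
    """Serialize one audit event as a JSON line with explicit attribution."""
    if actor.actor_type not in {"human", "agent"}:
        raise ValueError(f"unknown actor_type: {actor.actor_type!r}")
    event = {"action": action, "resource": resource, **asdict(actor)}
    return json.dumps(event, sort_keys=True)


def is_agent_event(line: str) -> bool:
    """Filter helper: True when the logged event was performed by an agent."""
    return json.loads(line).get("actor_type") == "agent"
```

For example, `audit_record(Actor("agent", "invoice-bot-7", "alice"), "read", "crm/accounts")` yields a log line that `is_agent_event` can separate from Alice's own activity, which a shared service account would not allow.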

The Need for Separation of Concerns

CrowdStrike CTO Elia Zaitsev drew an interesting analogy between securing agents and securing highly privileged users. “Securely managing agent access and activity is essential, just like securely managing access to highly privileged users,” Zaitsev explained. “It’s a defense in depth strategy, requiring a combination of technical and process-based measures to prevent and detect malicious activity.” This perspective highlights the importance of separating concerns, ensuring that each component within the agent is isolated and secure. This can be achieved by implementing a multi-layered approach, including:

- Implementing agent-specific credentials and access controls to limit the blast radius of a compromised agent.

- Giving each agent its own identity, rather than a shared service account or a generic workload identity, to reduce the attack surface.

- Enabling continuous monitoring and logging of agent activity to detect and respond to potential security incidents.

- Implementing a defense in depth strategy, including a combination of technical and process-based measures, to prevent and detect malicious activity.
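The first item in the list above, agent-specific credentials that limit blast radius, can be sketched as a small broker that mints short-lived tokens bound to one agent and a narrow set of scopes. This is an illustrative sketch under stated assumptions, not a production design: `CredentialBroker`, the scope strings, and the token layout are hypothetical, and a real deployment would normally use an established token format and a managed secret store.

```python
import base64
import hashlib
import hmac
import json
import time


class CredentialBroker:
    """Mints and checks short-lived, per-agent, scope-limited tokens (hypothetical)."""

    def __init__(self, signing_key: bytes):
        self._key = signing_key  # held by the broker only, never by the agent

    def _sign(self, payload: str) -> str:
        return hmac.new(self._key, payload.encode(), hashlib.sha256).hexdigest()

    def mint(self, agent_id, scopes, ttl_seconds=300, now=None) -> str:
        now = time.time() if now is None else now
        claims = {"sub": agent_id, "scopes": sorted(scopes), "exp": now + ttl_seconds}
        raw = json.dumps(claims, sort_keys=True).encode()
        payload = base64.urlsafe_b64encode(raw).decode()
        return f"{payload}.{self._sign(payload)}"

    def authorize(self, token: str, required_scope: str, now=None) -> bool:
        now = time.time() if now is None else now
        try:
            payload, signature = token.rsplit(".", 1)
        except ValueError:
            return False  # no signature part at all
        # Constant-time comparison; reject anything not signed by this broker.
        if not hmac.compare_digest(signature.encode(), self._sign(payload).encode()):
            return False
        claims = json.loads(base64.urlsafe_b64decode(payload))
        return now < claims["exp"] and required_scope in claims["scopes"]
```

Because each token names a single agent, carries only the scopes that agent needs, and expires after a few minutes, a stolen token exposes far less than a long-lived shared service account would.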

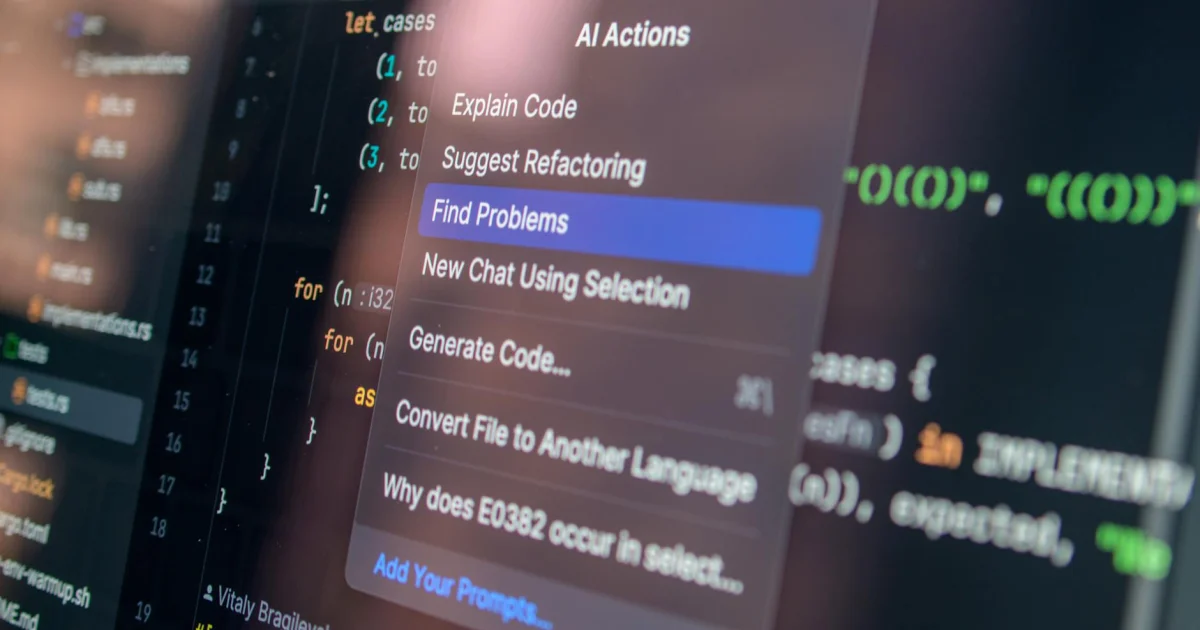

Anthropic’s Managed Agents: A New Approach

Anthropic’s Managed Agents, launched in public beta on April 8, presents a novel approach to AI security. By separating the agent into three components (the brain, the hands, and the sandbox), it addresses the monolithic agent problem head-on. The brain is the reasoning component, the hands are the execution component, and the sandbox is the isolated environment where the agent’s code is executed. This design provides a clear separation of concerns: each component trusts only the components it needs to, which reduces the attack surface and improves overall security.
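The general idea of separating reasoning, execution, and isolation can be illustrated with a small sketch. It does not reproduce Anthropic’s implementation or API: the `Brain`, `Hands`, and `Sandbox` classes, the `run_python` tool name, and the environment allowlist are assumptions made for this example, and a child process is only a stand-in for a real sandbox.

```python
import os
import subprocess
import sys
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    tool: str
    argument: str


class Brain:
    """Reasoning only: proposes a tool call and holds no credentials."""

    def plan(self, task: str) -> ToolCall:
        # Stand-in for a model call; here the task is treated as code to run.
        return ToolCall(tool="run_python", argument=task)


class Sandbox:
    """Runs code in a child process that inherits only allowlisted variables."""

    _ENV_ALLOWLIST = ("PATH", "LD_LIBRARY_PATH", "SYSTEMROOT")

    def run(self, code: str) -> str:
        env = {k: os.environ[k] for k in self._ENV_ALLOWLIST if k in os.environ}
        result = subprocess.run(
            [sys.executable, "-I", "-c", code],  # -I: isolated interpreter mode
            env=env, capture_output=True, text=True, timeout=10,
        )
        return result.stdout.strip()


class Hands:
    """Execution: checks each proposed call against an allowlist, then dispatches."""

    def __init__(self, sandbox: Sandbox, allowed_tools):
        self._sandbox = sandbox
        self._allowed = set(allowed_tools)

    def execute(self, call: ToolCall) -> str:
        if call.tool not in self._allowed:
            raise PermissionError(f"tool not permitted: {call.tool}")
        return self._sandbox.run(call.argument)
```

In this arrangement, secrets present in the parent process's environment are not passed to the code the agent generates, and the brain can only request actions that the hands are configured to allow.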

Benefits of Anthropic’s Managed Agents

The benefits of Anthropic’s Managed Agents are multifaceted:

- Improved security: By separating concerns, Anthropic’s solution reduces the attack surface and minimizes the blast radius of a compromised agent.

- Increased trust: The clear separation of components promotes trust between stakeholders, as each component is isolated and secure.

- Enhanced flexibility: Anthropic’s solution allows for easier maintenance and updates, as each component can be modified independently.

- Better compliance: The clear separation of concerns and agent-specific credentials and access controls improve compliance with regulatory requirements.

The Importance of AI Governance

AI governance is a critical aspect of ensuring the security and trustworthiness of AI agents. It involves establishing policies, procedures, and controls to manage the development, deployment, and operation of AI systems. A well-defined AI governance framework helps to:

- Establish clear roles and responsibilities for AI development and deployment.

- Define security requirements and protocols for AI systems.

- Implement monitoring and logging to detect potential security incidents.

- Ensure compliance with regulatory requirements.

The Gap Between Deployment Velocity and Security Readiness

The current pace of AI adoption often leaves security teams struggling to keep up. Breakout time, the time it takes an attacker to move from the initially compromised system to other systems in the environment, has fallen to an average of 29 minutes, and the fastest observed breakout took a mere 27 seconds. At that speed, security readiness has to keep pace with deployment velocity rather than follow it. Measures such as agent-specific credentials, per-agent identities, and continuous monitoring reduce the risk of security incidents and support the long-term trustworthiness of AI agents.

Conclusion

The security of AI agents requires immediate attention. The monolithic agent pattern, combined with widespread reliance on shared service accounts and workload identities in place of agent-specific credentials, creates a significant security risk. Anthropic’s Managed Agents presents one approach: separate the agent’s components so that each trusts only what it needs to, which reduces the attack surface. Organizations that pair this kind of separation with agent-specific credentials, continuous monitoring, and a clear governance framework for developing, deploying, and operating AI systems are better placed to keep their agents trustworthy over the long term.

Recommendations

To address the security concerns surrounding AI agents, consider the following:

- Implement agent-specific credentials and access controls to limit the blast radius of a compromised agent.

- Give each agent its own identity, rather than a shared service account or a generic workload identity, to reduce the attack surface.

- Enable continuous monitoring and logging of agent activity to detect and respond to potential security incidents.

- Implement a defense in depth strategy, including a combination of technical and process-based measures, to prevent and detect malicious activity.

- Establish a clear AI governance framework to manage the development, deployment, and operation of AI systems.

Future Research Directions

Future research should focus on:

- Developing more effective methods for separating concerns and reducing the attack surface.

- Improving the detection and response capabilities for AI security incidents.

- Establishing clear roles and responsibilities for AI development and deployment.

- Developing more effective AI governance frameworks to manage the development, deployment, and operation of AI systems.