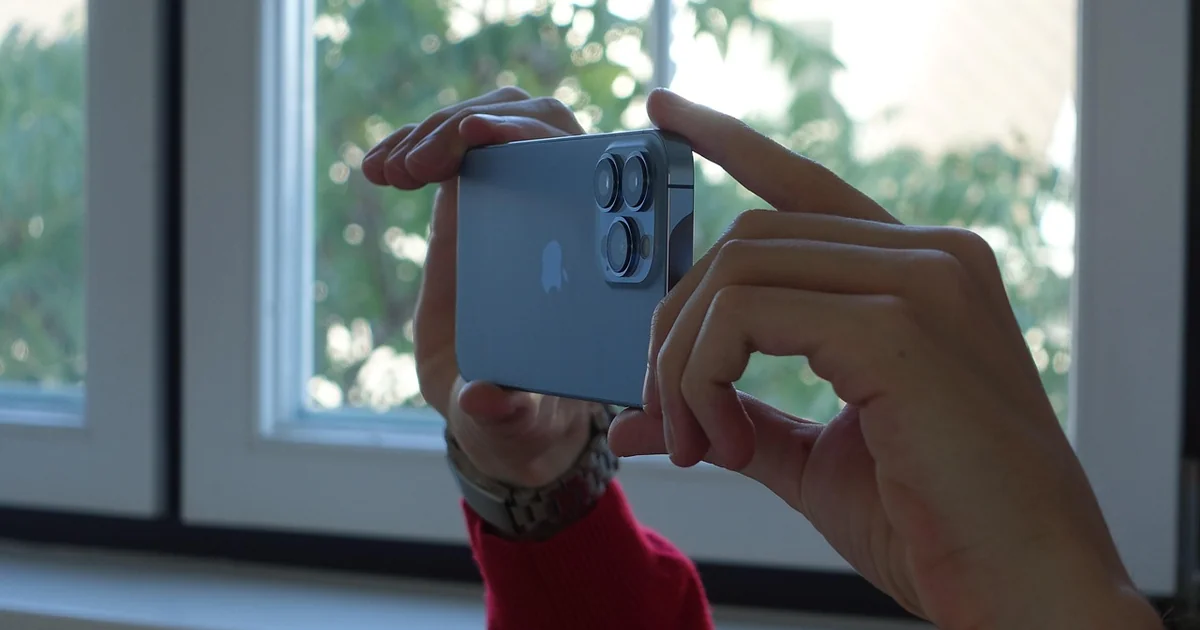

The landscape of mobile photography is shifting from simple pixel counting to a sophisticated dance between physical glass and silicon intelligence. For years, smartphone enthusiasts have waited for a leap that feels truly transformative rather than just incremental. Recent industry whispers suggest that we are standing on the precipice of such a moment. With the upcoming release cycle, the rumored iPhone 18 Pro camera system promises to bridge the gap between handheld convenience and professional-grade optical performance through a massive overhaul of hardware components.

The Era of Physical Optical Control

For a long time, mobile photography has relied heavily on computational photography to mimic the effects of large-sensor cameras. While software can do wonders, it often struggles with the natural transitions of light and the organic fall-off of focus. The upcoming hardware shifts aim to address these fundamental limitations by introducing mechanical changes that were previously thought to be too bulky for a slim smartphone chassis.

Imagine a photographer standing in a dimly lit cobblestone street in Europe. They want to capture a portrait where the subject pops against a creamy, blurred background, but the current software-driven Portrait Mode occasionally makes mistakes, such as cutting off stray hairs or failing to recognize the edge of a jacket. This is the exact problem that physical hardware upgrades seek to solve. By moving away from purely mathematical approximations of depth, the next generation of devices aims to provide authentic optical bokeh.

1. The Introduction of Variable Aperture Technology

One of the most significant rumors circulating involves the implementation of a variable aperture on the main sensor. In traditional photography, the aperture is the opening in the lens that controls how much light enters the camera and how much of the image is in focus. Currently, most smartphones have a fixed aperture, meaning the light intake and depth of field are essentially locked in.

A variable aperture would allow the iPhone 18 Pro camera to physically adjust its opening. If you are shooting a bright, sun-drenched landscape, the aperture can narrow to prevent overexposure and increase sharpness across the frame. Conversely, in a low-light setting, the aperture can open wide to take in as much light as possible, reducing the need for artificial brightening that often introduces digital noise.

This capability offers a level of creative agency that has been missing from the mobile experience. A user could manually dial in a wide aperture to achieve a shallow depth of field for a macro shot of a flower, or tighten it up to ensure a mountain range remains crisp from the foreground to the horizon. This moves the device from a tool that makes decisions for you to a tool that follows your creative intent.
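To make the depth-of-field trade-off concrete, here is a rough sketch using the standard thin-lens hyperfocal approximation. The focal length, circle of confusion, subject distance, and f-numbers below are illustrative placeholders, not rumored specifications for any device.

```python
import math


def hyperfocal_mm(focal_mm, f_number, coc_mm):
    """Hyperfocal distance (mm) for a given focal length, f-number,
    and acceptable circle of confusion."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


def depth_of_field_mm(focal_mm, f_number, coc_mm, subject_mm):
    """Return (near_limit, far_limit) of acceptable sharpness in mm."""
    h = hyperfocal_mm(focal_mm, f_number, coc_mm)
    near = subject_mm * (h - focal_mm) / (h + subject_mm - 2 * focal_mm)
    if subject_mm >= h:
        # Focused at or beyond the hyperfocal distance: sharp to infinity.
        far = math.inf
    else:
        far = subject_mm * (h - focal_mm) / (h - subject_mm)
    return near, far


# Hypothetical values: 7 mm lens, 0.005 mm circle of confusion,
# subject 0.5 m away, compared at a wide and a narrow aperture.
for f_number in (1.8, 4.0):
    near, far = depth_of_field_mm(7.0, f_number, 0.005, 500.0)
    print(f"f/{f_number}: sharp from {near:.0f} mm to {far:.0f} mm")
```

Under these assumptions, the wider aperture yields a noticeably thinner zone of sharpness around the subject, which is the effect a user would be dialing in for a flower close-up. Stopping down stretches that zone in both directions.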

2. Enhanced Telephoto Aperture for Low Light Zoom

Telephoto lenses have historically been the Achilles’ heel of smartphone cameras. Because these modules typically pair a narrower aperture with a smaller sensor, they struggle to gather as much light as the main wide-angle camera. This often results in grainy, soft images when you try to zoom in during evening hours or at indoor events.

The anticipated upgrade for the telephoto module involves a significantly wider aperture. By increasing the diameter of the light-gathering element, the device can maintain high image fidelity even at high magnification levels. This is a game-changer for concert photographers or wildlife enthusiasts who cannot physically move closer to their subject. Instead of relying on digital cropping, which degrades quality, the hardware itself will be capable of capturing more raw data through the zoomed lens.
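How much a wider aperture helps can be estimated with simple arithmetic: light reaching the sensor scales with the inverse square of the f-number, and each doubling of light is one "stop." The sketch below shows that relationship. The f-numbers used are hypothetical examples, not leaked figures.

```python
import math


def light_ratio(old_f_number, new_f_number):
    """How many times more light the new aperture admits than the old one.
    Light gathered is proportional to 1 / f_number**2."""
    return (old_f_number / new_f_number) ** 2


def stops_gained(old_f_number, new_f_number):
    """Exposure gain in stops; one stop is a doubling of light."""
    return math.log2(light_ratio(old_f_number, new_f_number))


# Hypothetical example: a telephoto lens moving from f/2.8 to f/2.0.
old_f, new_f = 2.8, 2.0
print(f"Light ratio: {light_ratio(old_f, new_f):.2f}x")
print(f"Stops gained: {stops_gained(old_f, new_f):.2f}")
```

In practice, a gain of roughly one stop means the camera can halve its ISO or its exposure time for the same brightness, which is where the cleaner, less processed look comes from.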

This upgrade addresses a specific pain point for content creators who rely on long-range shots for storytelling. When the hardware is capable of better light intake, the software doesn’t have to work as hard to “clean up” the image, resulting in a more natural look that lacks the plastic, over-processed sheen seen in many current zoom photos.

3. Advanced Visual Intelligence via Siri Integration

Hardware is only half of the equation; the other half is how we interact with that hardware. Reports indicate that iOS 27 will introduce a specialized mode within the camera interface designed for visual intelligence. This isn’t just about recognizing a face; it is about a deep, contextual understanding of what the lens is seeing.

Think of this as an intelligent layer that sits between your eye and the sensor. If you point your camera at a complex piece of machinery or a foreign language menu, the visual intelligence mode could provide real-time data overlays. This goes beyond simple OCR (Optical Character Recognition) and enters the realm of semantic understanding. The camera becomes a proactive assistant rather than a passive observer.

For the everyday user, this might mean the camera can automatically suggest the best composition based on the golden ratio or identify the specific species of a plant in your garden. For professionals, it could mean the camera recognizes lighting conditions and suggests the optimal aperture and ISO settings before you even press the shutter button. This synergy between the iPhone 18 Pro camera hardware and AI software creates a feedback loop that makes every shot more intentional.

4. Next-Generation Sensor Architecture

While aperture changes the way light enters, the sensor itself determines how that light is converted into a digital image. There is significant speculation regarding a shift in sensor architecture, possibly moving toward larger individual pixels or a stacked sensor design. A stacked sensor separates the light-sensitive photodiodes from the processing circuitry, allowing for faster readout speeds and better dynamic range.

Dynamic range is a concept many users encounter when it runs out: a bright sky turns white or a shadow becomes a black void in a photo. By utilizing a more advanced sensor architecture, the device can capture a wider range of light intensities simultaneously. This allows for a more nuanced reconstruction of the scene, preserving the subtle textures in a white cloud and the intricate details in a dark shadow.

This technical leap is crucial for the growing community of mobile videographers. High-speed sensor readout is essential for capturing high-frame-rate video without the “rolling shutter” effect, where fast-moving objects appear slanted or distorted. As video becomes a primary medium for social expression, the stability and speed of the sensor become paramount.
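The link between readout speed and rolling shutter can be approximated with one multiplication: an object moving sideways shifts by its speed times the time the sensor takes to read from the top row to the bottom row. The sketch below uses made-up readout times and an assumed object speed purely for illustration.

```python
def rolling_shutter_skew_px(speed_px_per_s, readout_time_s, height_fraction=1.0):
    """Approximate horizontal skew (in pixels) of a moving object.

    speed_px_per_s:  horizontal speed of the object across the frame
    readout_time_s:  time to read the sensor from first row to last row
    height_fraction: share of the frame height the object spans (0 to 1)
    """
    return speed_px_per_s * readout_time_s * height_fraction


# Hypothetical comparison: a slower sensor vs. a faster stacked sensor,
# filming an object crossing the frame at 2000 px/s.
for label, readout_s in (("slower readout", 0.020), ("faster readout", 0.005)):
    skew = rolling_shutter_skew_px(2000.0, readout_s)
    print(f"{label}: about {skew:.0f} px of skew over the full frame height")
```

Under these assumptions, cutting readout time to a quarter cuts the slant to a quarter, which is why readout speed matters so much for fast pans and action footage.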

5. AI-Driven Post-Processing Tools

The journey of a photo does not end when the shutter clicks. The upcoming software ecosystem is expected to feature robust AI-driven editing tools that feel more like magic than manual labor. We are moving toward a world where “fixing” a photo is as simple as describing what you want to change.

Consider a scenario where you have taken a perfect photo of a family gathering, but a stranger is walking through the background, or a trash can is distracting from the scene. Current tools require careful selection and masking. The new AI tools aim to make this a generative process. You could potentially tap on an unwanted object and tell the device to “remove and fill with background,” and the AI will intelligently reconstruct the scene based on the surrounding context.

This level of control democratizes high-end photo editing. It removes the steep learning curve associated with professional software like Lightroom or Photoshop, allowing anyone with a vision to execute it. However, the key will be maintaining realism. The challenge for developers is ensuring that these AI edits don’t result in uncanny, artificial-looking images, but rather seamless corrections that respect the original lighting and texture.

6. Improved Thermal Management for Sustained Performance

One often overlooked aspect of high-end mobile photography is heat. Processing 4K video or high-resolution RAW images generates an immense amount of thermal energy. Many users have experienced the frustration of the camera app closing or the device dimming the screen because it has become too hot to operate.

To support the massive data throughput of the new iPhone 18 Pro camera system, Apple will likely need to implement more sophisticated thermal management solutions. This could involve new internal vapor chambers or advanced graphite heat spreaders. Ensuring that the device can maintain peak performance during a long video shoot is a hardware necessity that directly impacts user experience.

For a content creator filming a vlog in a warm environment, this is a critical feature. A camera that can run for thirty minutes without throttling its performance is significantly more valuable than one that produces a stunning image for only two minutes before overheating. This focus on sustained performance reflects a shift toward treating the smartphone as a serious production tool.

7. Expanded Computational Depth Mapping

Even with physical aperture changes, software will continue to play a role in how we perceive depth. The next generation of devices is expected to use more advanced LiDAR (Light Detection and Ranging) or similar depth-sensing technology to create highly accurate 3D maps of the environment. This goes beyond just identifying where a person is; it involves understanding the geometry of the entire room.

This granular depth data allows for much more sophisticated effects. For example, in video mode, a highly accurate depth map allows for “cinematic” focus pulls, where the focus shifts smoothly from a foreground object to a background object, mimicking the way a professional cinema lens operates. It also enables more realistic Augmented Reality (AR) experiences, where digital objects can hide behind physical furniture or interact with the lighting of the room.

The integration of this depth data into the standard photo workflow means that even after a photo is taken, you might be able to adjust the focal plane. If you realized later that the focus was slightly off, the depth map could allow you to “refocus” the image in post-production, something a conventional single exposure cannot offer on its own.

Bridging the Gap: Hardware vs. Software

A common question among tech enthusiasts is how much of this improvement is truly “new” and how much is just better math. The reality is that the future of imaging lies in the intersection of the two. A better lens is useless if the processor cannot handle the data, and the most powerful AI in the world cannot fix a blurry, poorly lit image captured by a tiny, low-quality sensor.

The rumored upgrades for the iPhone 18 Pro camera represent a holistic approach. By upgrading the physical aperture, the sensor, and the thermal management, Apple is building a foundation that allows its AI and software tools to reach their full potential. We are seeing a move away from “faking” professional results and toward “enabling” them through superior hardware.

For those deciding whether to upgrade, it is important to look at how you use your device. If you are a casual user who mostly takes snapshots of food or pets, the current generation is likely sufficient. However, if you are a creator, a hobbyist, or someone who views their smartphone as their primary camera, these hardware-centric leaps represent the most significant reason to participate in this upcoming upgrade cycle.

The evolution of mobile imaging is moving toward a state of total transparency, where the technology disappears and only the creativity remains. As these physical and digital advancements converge, the distinction between a professional camera and a smartphone will continue to blur, one shutter click at a time.