Artificial intelligence (AI) has seen remarkable advancements over the years, with AI models growing steadily in size and complexity. One approach gaining traction today is the Mixture of Experts (MoE) architecture, which lets a model grow its total capacity without a proportional increase in computation by distributing processing across specialized subnetworks known as “experts.” In this article, we’ll explore how this architecture works, the role of sparsity, routing strategies, and its real-world applications, including the Mixtral model. We’ll also discuss the challenges these systems face and the solutions developed to address them.

Understanding the Mixture of Experts (MoE) Approach

The Mixture of Experts (MoE) is a machine learning technique that divides a model into smaller, specialized networks, each of which learns to handle a particular portion of the input space. This is akin to assembling a team where each member has skills suited to particular challenges. The idea isn’t new: it dates back to the 1991 paper “Adaptive Mixtures of Local Experts” by Jacobs, Jordan, Nowlan, and Hinton, which showed the benefits of having separate networks specialize in different subsets of the training cases.

Fast forward to today, and MoE is experiencing a resurgence, particularly in large language models, where it is used to scale model capacity efficiently. At its core, the system comprises several components: an input layer, multiple expert networks, a gating network, and an output layer. The gating network acts as a coordinator, deciding which expert networks to activate for a given input. Because only the selected experts run, the model avoids engaging the entire network for every input, which keeps the computation per input well below that of a dense model with the same total number of parameters.
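
To make these components concrete, here is a minimal sketch in plain Python of the simplest arrangement, in which the gating network assigns a softmax weight to every expert and the output layer sums the weighted expert outputs. Everything here is an illustrative assumption rather than any particular model's implementation: each expert is a hypothetical single linear layer, the gate is one linear scoring layer, and the names `dense_moe` and `matvec` are made up. Sparse variants keep this structure but run only the highest-scoring experts.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    """Multiply a matrix (a list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def dense_moe(x, expert_matrices, gate_matrix):
    """Dense mixture of experts for one input vector x.

    expert_matrices: one weight matrix per expert (each expert is a
        hypothetical single linear layer, used only for illustration).
    gate_matrix: one row per expert; row i produces expert i's score.
    Returns (output, gate_weights).
    """
    gate = softmax(matvec(gate_matrix, x))             # gating network
    outputs = [matvec(W, x) for W in expert_matrices]  # every expert runs
    dim = len(outputs[0])
    # Output layer: gate-weighted sum of the expert outputs
    y = [sum(g * out[d] for g, out in zip(gate, outputs)) for d in range(dim)]
    return y, gate


# Two toy experts: one copies the input, one doubles it.
experts = [[[1.0, 0.0], [0.0, 1.0]],
           [[2.0, 0.0], [0.0, 2.0]]]
gate_w = [[0.0, 0.0], [0.0, 0.0]]  # equal scores, so equal weights
y, g = dense_moe([1.0, 0.0], experts, gate_w)
print(g)  # [0.5, 0.5]
print(y)  # [1.5, 0.0]
```

Here every expert does work on every input, which is exactly the cost that sparse routing avoids.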

The Role of Sparsity in AI Models

An essential concept within MoE architecture is sparsity, which means activating only a subset of experts for each input (in language models, typically each token). Instead of engaging all network resources, the model uses only the selected experts and their parameters. This targeted selection significantly reduces computation, which matters most for large models working on complex, high-dimensional data such as natural language. Sparse models also allow for specialized processing. For example, different parts of a sentence may call for distinct types of analysis: one expert might become adept at handling idioms, while another could specialize in parsing complex grammatical structures.

Because only the necessary experts are activated, the computation spent on each input grows with the number of active parameters rather than the total number. This lets MoE models hold far more parameters, and therefore more capacity to learn specialized behavior, than a dense model with the same per-input compute. The trade-off is memory: all experts must still be stored, even though only a few run for any given input.
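
The efficiency argument comes down to simple arithmetic. The sketch below counts total and per-input (active) parameters for a hypothetical MoE model; the function name `moe_param_counts` and all the sizes are illustrative assumptions, and everything every input uses (attention, embeddings, and so on) is lumped into a single `shared_params` figure.

```python
def moe_param_counts(num_experts, top_k, params_per_expert, shared_params):
    """Return (total, active_per_input) parameter counts for a hypothetical MoE.

    shared_params: parameters every input uses (attention, embeddings, ...).
    params_per_expert: parameters in a single expert network.
    """
    if not 1 <= top_k <= num_experts:
        raise ValueError("top_k must be between 1 and num_experts")
    total = shared_params + num_experts * params_per_expert
    active = shared_params + top_k * params_per_expert
    return total, active


# Made-up sizes: 8 experts of 100M parameters each, 200M shared, top-2 routing.
total, active = moe_param_counts(8, 2, 100_000_000, 200_000_000)
print(total, active, active / total)  # 1000000000 400000000 0.4
```

With these made-up numbers, each input touches 40% of the model's parameters, yet all 1 billion must still be held in memory.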

The Art of Routing in MoE Architectures

Routing is another critical component of the Mixture of Experts model. The gating network, also called the router, decides which experts to activate for each input. A good routing strategy selects the most suitable experts while keeping the workload balanced across the network. Typically, the gating network is a small learned layer that assigns a score to each expert based on the input; the experts with the highest scores are selected, and their scores determine how much each one contributes to the output.

One popular strategy is “top-k” routing, in which the k highest-scoring experts are chosen for each input. A common choice is k = 2 (“top-2” routing), which activates the two best experts and balances output quality against computational cost; Mixtral, for instance, uses top-2 routing, while the Switch Transformer routes each token to a single expert. Larger values of k spend more computation per input, while smaller values are cheaper but give each input fewer experts to draw on.
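
A minimal sketch of top-k selection for a single input follows. It assumes the gating scores have already been computed and applies a softmax over only the selected scores, so the chosen experts' weights sum to 1. This is one common formulation (Mixtral, for example, normalizes over its top-2 experts), but some models normalize differently; the function name `top_k_route` is hypothetical.

```python
import math


def top_k_route(scores, k):
    """Select the k highest-scoring experts for one input.

    scores: one gating score (logit) per expert.
    Returns (expert_index, weight) pairs, best first; weights sum to 1
    and every unselected expert implicitly gets weight 0.
    """
    if not 1 <= k <= len(scores):
        raise ValueError("k must be between 1 and the number of experts")
    # Indices of the k largest scores, highest first
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the selected scores only (shifted by the max for stability)
    m = scores[top[0]]
    exps = [math.exp(scores[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


routes = top_k_route([1.0, 3.0, 0.5, 2.0], k=2)
print([(i, round(w, 3)) for i, w in routes])  # [(1, 0.731), (3, 0.269)]
```

Experts 0 and 2 are never evaluated for this input, which is where the computational savings come from.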

Load Balancing Challenges and Solutions

While MoE models have clear advantages, they also introduce specific challenges, particularly load balancing. The gating network may learn to favor only a few experts, leading to an uneven distribution of work. This imbalance tends to reinforce itself: the favored experts receive more training updates, improve, and are selected even more often, while the rest are underutilized and remain undertrained. An overloaded expert can also become a computational bottleneck when experts are spread across different devices.
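
One simple way to see the imbalance is to count how often each expert is chosen over a batch. The sketch below assumes routing decisions are available as a list of chosen expert indices per token; `expert_load` is a hypothetical helper, and the example assignments are made up to mimic a router that keeps favoring experts 0 and 1.

```python
from collections import Counter


def expert_load(assignments, num_experts):
    """Fraction of all routing slots handled by each expert.

    assignments: for each token, the list of expert indices it was sent to.
    A perfectly balanced router gives every expert 1 / num_experts.
    """
    counts = Counter(e for chosen in assignments for e in chosen)
    total = sum(counts.values())
    if total == 0:
        return [0.0] * num_experts
    return [counts[e] / total for e in range(num_experts)]


# Made-up top-2 decisions for four tokens over four experts.
routing = [[0, 1], [0, 1], [1, 0], [0, 2]]
load = expert_load(routing, num_experts=4)
print(load)                   # [0.5, 0.375, 0.125, 0.0]
print(max(load) * len(load))  # 2.0 (busiest expert vs. its fair share)
```

With four experts, a balanced router would give each one 0.25 of the slots; here expert 0 handles twice that share and expert 3 sits idle.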

To address this challenge, researchers developed “noisy top-k” gating (Shazeer et al., 2017), which adds tunable Gaussian noise to the gating scores before the top-k experts are selected. This controlled randomness means that experts with slightly lower scores are sometimes chosen, so the router keeps exploring different experts across inputs instead of settling too early on a favored few. In practice, noisy gating is usually combined with an auxiliary load-balancing loss that penalizes uneven expert usage during training, helping keep any individual expert from being overtrained or undertrained.
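
The sketch below follows the noisy top-k gating formulation of Shazeer et al. (2017): each expert's score is perturbed by standard Gaussian noise scaled by a softplus of a second, per-expert noise logit, then only the top k perturbed scores are kept and passed through a softmax. To keep it short, it assumes both sets of logits are already computed (in the paper they come from learned weight matrices applied to the input) and it omits the auxiliary load-balancing losses the paper also uses during training. The function names are hypothetical.

```python
import math
import random
from collections import Counter


def softplus(z):
    """Numerically stable log(1 + e^z); always positive."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def noisy_top_k_gate(clean_logits, noise_logits, k, rng):
    """Noisy top-k gating for one input, after Shazeer et al. (2017).

    clean_logits: per-expert gating scores (x . W_g in the paper).
    noise_logits: per-expert values setting the noise scale (x . W_noise).
    Returns one gate value per expert: k non-zero entries summing to 1.
    """
    # Perturb each score with Gaussian noise scaled by softplus(noise logit)
    noisy = [c + rng.gauss(0.0, 1.0) * softplus(n)
             for c, n in zip(clean_logits, noise_logits)]
    # Keep the top k perturbed scores; the rest are treated as -infinity
    top = sorted(range(len(noisy)), key=lambda i: noisy[i], reverse=True)[:k]
    m = noisy[top[0]]
    exps = {i: math.exp(noisy[i] - m) for i in top}
    total = sum(exps.values())
    return [exps[i] / total if i in exps else 0.0 for i in range(len(noisy))]


# Toy example: experts 0 and 1 score slightly higher than 2 and 3.
rng = random.Random(0)
clean = [1.0, 0.9, 0.2, 0.1]
noise = [0.0, 0.0, 0.0, 0.0]  # softplus(0) = ln 2, roughly 0.69
picks = Counter()
for _ in range(1000):
    gates = noisy_top_k_gate(clean, noise, k=2, rng=rng)
    picks.update(i for i, g in enumerate(gates) if g > 0)
print(sorted(picks.items()))
```

Without the noise term, experts 2 and 3 would never be selected for this input; with it, they are typically chosen in a noticeable share of the 1,000 draws, while experts 0 and 1 still win most often.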

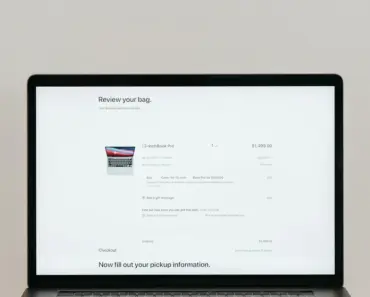

What Actually Happens During MoE Inference

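In a traditional dense model, every layer and every parameter contribute to generating the response. In an MoE model, by contrast, each input passes through only the few experts that the gating network selects. In transformer-based MoE models such as Mixtral, the experts usually replace the feed-forward block inside each layer, while the attention layers are shared by all inputs. When an input is processed through an MoE model, the following occurs: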

- The input is fed into the gating network, which scores every expert and selects which ones to activate.

- Only the selected experts process the input, each using its own parameters; the others are skipped.

- Each selected expert's output is weighted by the score the gating network assigned to it, and the weighted outputs are summed.

- The combined result is passed to the output layer (in a transformer, on to the next layer), which produces the final output.
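
Putting these steps together, here is a minimal per-input sketch of one sparse MoE layer. The experts are hypothetical stand-in functions rather than real feed-forward networks, the gate is a single linear scoring layer, and `sparse_moe_forward` is a made-up name; production implementations process whole batches of tokens and dispatch them to experts in parallel. The returned list of expert indices makes it visible that only k experts actually run.

```python
import math


def sparse_moe_forward(x, experts, gate_matrix, k):
    """Apply one sparse MoE layer to a single input vector x.

    experts: callables mapping a vector to a vector of the same length
        (hypothetical stand-ins for expert feed-forward networks).
    gate_matrix: one row per expert; expert i's score is row_i . x.
    k: number of experts to activate.
    Returns (output_vector, indices_of_experts_that_ran).
    """
    # Step 1: the gating network scores every expert and keeps the top k
    scores = [sum(w * v for w, v in zip(row, x)) for row in gate_matrix]
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    m = scores[top[0]]
    exps = [math.exp(scores[i] - m) for i in top]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Step 2: only the selected experts run; the others are never called
    outputs = [experts[i](x) for i in top]
    # Steps 3-4: weight each expert's output by its gate value and sum
    y = [sum(w * out[d] for w, out in zip(weights, outputs)) for d in range(len(x))]
    return y, top


toy_experts = [
    lambda v: [2.0 * a for a in v],  # expert 0: doubles the input
    lambda v: [a + 1.0 for a in v],  # expert 1: adds 1
    lambda v: [-a for a in v],       # expert 2: negates
    lambda v: [0.0 for _ in v],      # expert 3: outputs zeros
]
gate_w = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
y, used = sparse_moe_forward([2.0, 1.0], toy_experts, gate_w, k=2)
print(used)                      # [0, 1]
print([round(v, 3) for v in y])  # [3.731, 2.0]
```

Experts 2 and 3 are never called here; in a real model, skipping them is what keeps per-input compute low even as more experts are added.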

Real-World Applications of MoE

MoE models have been applied in various domains, including natural language processing, computer vision, and recommendation systems. In natural language processing, they have been used for tasks such as machine translation and large-scale language modeling: GShard used MoE layers to scale multilingual translation, and Mixtral 8x7B uses eight experts per layer with top-2 routing, giving it about 47 billion total parameters of which only about 13 billion are active for each token.

In computer vision, sparse expert layers have been explored for tasks such as image classification, object detection, and segmentation; Vision MoE (V-MoE), for example, applies them to image classification. In recommendation systems, the Multi-gate Mixture-of-Experts (MMoE) approach gives each prediction task its own gating network over a shared pool of experts, which suits settings where several user-preference signals must be predicted at once.

Conclusion

The Mixture of Experts (MoE) architecture divides a model into specialized subnetworks and uses a gating network to route each input to only a few of them. Through sparsity and top-k routing, MoE models can grow their total capacity while keeping the computation per input close to that of a much smaller dense model. These systems do introduce challenges, particularly load balancing, but techniques such as noisy top-k gating help keep expert usage balanced. Together, these ideas have made MoE a practical way to scale AI systems in domains ranging from language modeling to computer vision and recommendation.